Lifting the Hood on Cisco Software Defined Access

If you're an IT professional who doesn't live under a rock and pays even minimal attention to what Cisco is doing in the market, you've no doubt heard about the major launch that took place in June: "The network. Intuitive." The anchor solution of this launch is Cisco's Software Defined Access (SDA), in which the campus network becomes automated, highly secure, and highly scalable.

The launch of SDA is what's called a "Tier 1" launch, where Cisco's corporate marketing muscle is fully exercised to generate as much attention and interest as possible. As a result, there's a lot of good high-level material about SDA floating around right now. In this post, I'm going to lift the hood on the solution and explain what actually makes the SDA network fabric work.

SDA's (Technical) Benefits

Let's examine the benefits of SDA through a technical lens (putting aside the business benefits we've been hearing about since the launch).

- Eliminates STP (!!). How many years have we been hearing about this in the data center?? Now the same is true in the campus network as well. STP can finally be left in the dust.

- Network convergence is driven by a routing protocol, not by STP. In the underlay of the SDA fabric, an Interior Gateway Protocol (IGP) runs in the control plane; it builds the network topology and finds a way around link or node failures. Convergence is now fast and safe: a failure is handled by the IGP recalculating routes, not by STP unblocking ports.

- All links are forwarding. Because the underlay relies on Layer 3 forwarding and an IGP, the network can use Equal Cost Multi-Path (ECMP) routing, so all links are forwarding at all times. This maximizes bandwidth between any two points in the fabric and puts the whole network to work (no links sit idle).

- Only the network elements at the edge of the fabric need to be fabric aware. Network elements in the "middle" of the fabric are simply IP forwarders. These devices only need to run the underlay's IGP and never participate in the SDA control, data, or policy plane operations.

- Policy enforcement is possible both between and within VLANs. SDA enables true micro-segmentation within the campus network with policy being applied at a {user,device} level of granularity. Policy is no longer tied to an IP address or subnet, but can be tied to the identity of the user who's logged into the device and even the type of device itself (think: corporate device vs. a personal device).

- Host mobility from anywhere to anywhere in the fabric. A wired or wireless host can move about the network (to another room, another floor, or another building) without its IP address changing. All of the user's sessions can persist throughout the move, and of course, the security policy assigned to that {user,device} follows along too.

- IP subnetting becomes far simpler. Because the fabric enables host mobility (and does so without stretching VLANs), and because of the fabric's micro-segmentation capabilities, there's no longer a need to carve up the address space based on security policy; security policy is enforced regardless of the IP address of the source or destination host. As a result, the IP subnet design becomes very simple and very flat. It could be as simple as a single large subnet, such as a /16, for the whole campus. (I know, this goes against everything we've learned as network engineers and will take a lot of getting used to!)
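To make the "all links are forwarding" point a little more concrete, here's a minimal sketch of how a router can spread traffic across equal-cost paths by hashing each flow's 5-tuple, so that every uplink carries some flows while any single flow stays on one path. Real switches do this in hardware with their own hash functions (and in the SDA underlay, intermediate nodes hash on the outer headers of the encapsulated packet); the CRC32 hash, the `Flow` type, and the uplink names here are assumptions for illustration.

```python
import zlib
from collections import Counter
from typing import List, NamedTuple


class Flow(NamedTuple):
    """A flow's 5-tuple; every packet of a flow should follow the same path."""
    src_ip: str
    dst_ip: str
    proto: int
    src_port: int
    dst_port: int


def pick_next_hop(flow: Flow, next_hops: List[str]) -> str:
    """Pick one of several equal-cost next hops by hashing the 5-tuple.

    Hashing per flow (instead of spraying packets round-robin) keeps a
    flow's packets on one link, so they are not reordered in transit.
    """
    key = "|".join(str(field) for field in flow).encode()
    return next_hops[zlib.crc32(key) % len(next_hops)]


# Hypothetical uplinks from one edge node toward two intermediate nodes.
uplinks = ["uplink-to-intermediate-1", "uplink-to-intermediate-2"]

# 1,000 HTTPS flows between two hosts, differing only in source port.
flows = [Flow("10.1.0.10", "10.1.0.20", 6, 49152 + i, 443) for i in range(1000)]
usage = Counter(pick_next_hop(flow, uplinks) for flow in flows)
print(usage)  # both uplinks carry flows; the exact split depends on the hash
```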

Let's look now at the three major building blocks that make up SDA: the control plane, the data plane and the policy plane.

Control Plane

The SDA control plane is the Locator/ID Separation Protocol (LISP). This is the same protocol that's been used and discussed in data center networks and the same protocol that I've written about before.

LISP's major role in SDA is to provide a highly scalable routing architecture that allows the network to track every end device's point of attachment. LISP's conversational learning behavior allows each fabric node to learn only the topology information that's relevant to it, rather than having to learn and store the entire fabric's topology at all times. Think about it this way: the SDA fabric is tracking endpoints, i.e., it's tracking host routes. If every fabric node had to learn a host route for every device plugged into the network, an SDA fabric would scale very poorly. LISP sidesteps this by having a node ask for (and cache) the location of a destination only when it actually needs to send traffic there.

LISP also enables host mobility: a host can move around the network, possibly attaching to different edge switches or different access points, without having to release/renew its IP address. LISP tracks the host's point of attachment so that the network always knows where to send packets in order to reach the host.
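Here's a toy model of conversational learning and mobility, assuming nothing beyond what's described above: an edge node (ITR) caches locators only for destinations it actually sends to, and a host move is simply a new registration with a different locator. Real LISP exchanges Map-Register, Map-Request, and Map-Reply messages and has its own machinery for refreshing stale cache entries; the classes, method names, and addresses below are hypothetical stand-ins for that.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class MapServer:
    """Toy control plane node: maps each endpoint ID (EID) to its locator (RLOC)."""
    database: Dict[str, str] = field(default_factory=dict)

    def register(self, eid: str, rloc: str) -> None:
        # Edge nodes register endpoints as they attach. A newer registration
        # from a different edge node is how a host move shows up here.
        self.database[eid] = rloc

    def resolve(self, eid: str) -> Optional[str]:
        return self.database.get(eid)


@dataclass
class EdgeNode:
    """Toy ITR that caches locators only for destinations it actually talks to."""
    name: str
    map_server: MapServer
    map_cache: Dict[str, str] = field(default_factory=dict)

    def locator_for(self, dst_eid: str) -> Optional[str]:
        if dst_eid not in self.map_cache:
            rloc = self.map_server.resolve(dst_eid)  # cache miss: ask the control plane
            if rloc is None:
                return None
            self.map_cache[dst_eid] = rloc           # learned on demand
        return self.map_cache[dst_eid]

    def invalidate(self, dst_eid: str) -> None:
        # Stand-in for the LISP signaling that flushes a stale entry after a move.
        self.map_cache.pop(dst_eid, None)


ms = MapServer()
ms.register("10.1.0.20", "192.168.255.2")   # host behind edge-2's loopback
ms.register("10.1.0.30", "192.168.255.3")   # a host edge-1 never talks to
edge1 = EdgeNode("edge-1", ms)

print(edge1.locator_for("10.1.0.20"))  # 192.168.255.2
print(edge1.map_cache)                 # only 10.1.0.20 has been learned

# The host roams to edge-4: same IP, new locator.
ms.register("10.1.0.20", "192.168.255.4")
edge1.invalidate("10.1.0.20")
print(edge1.locator_for("10.1.0.20"))  # 192.168.255.4
```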

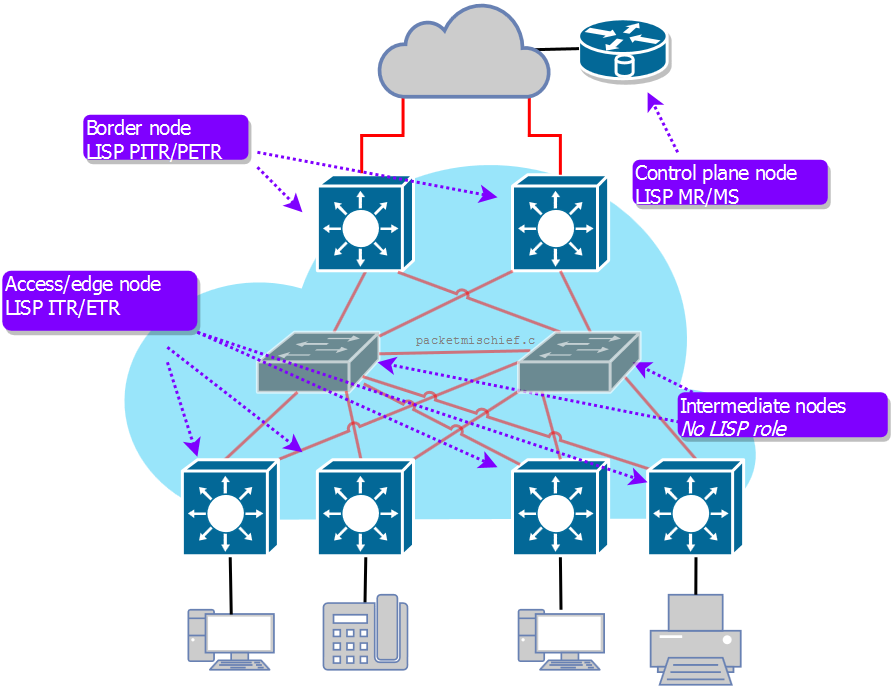

The network elements (NEs) that sit at the edge of the SDA fabric take on one or more roles in the network that correspond to one or more LISP functions.

- SDA access/edge node: This NE provides access to the SDA network for end devices such as computers, printers, phones, and so on. This NE functions as a LISP Ingress Tunnel Router (ITR) and Egress Tunnel Router (ETR), which means it is responsible for encapsulating traffic that enters the fabric (ITR function) and decapsulating traffic that's leaving the fabric toward an end device (ETR function).

- SDA border node: A border node connects the SDA fabric to some non-fabric network or possibly to a different SDA fabric; the border node provides a path in and out of the fabric. This NE functions as a LISP Proxy Ingress Tunnel Router (PITR) and Proxy Egress Tunnel Router (PETR) which means that this NE is seen as a "path of last resort". When an edge node (aka, an ITR) needs to send a packet to a destination outside of the fabric, the LISP control plane will not have any direct knowledge of how to get there. In that case, the edge/ITR will shoot the traffic to the PETR in order to get the traffic out of the fabric.

- Control plane node: In the LISP architecture, the Map Server (MS) and Map Resolver (MR) are separate functions; in the SDA solution, both are always co-resident on the same control plane node. The control plane node holds the LISP endpoint database, which associates each endpoint (identified by either an IP or a MAC address) with its locator (the edge switch or access point the endpoint is attached to). The control plane node also acts as a resolver, meaning that when an SDA NE needs to find out where a particular endpoint is attached to the network, it queries the control plane node for the answer. The control plane function can run on a separate NE (e.g., a CSR 1000V) or be co-resident on a border node.

- Intermediate node: An intermediate node is simply a Layer 3 forwarder that sits in the network between fabric-enabled nodes. These nodes do not participate in the SDA control or data planes; instead they simply route traffic between the fabric nodes.

As I'll cover in the data plane section, the network uses Virtual Routing and Forwarding (VRF) instances to create a layer of macro-segmentation. LISP is therefore VRF-aware: it performs lookups and stores the answers in the appropriate VRF for each endpoint.
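The roles above fit together roughly as follows. This sketch models a control plane node (MS and MR co-resident, as in SDA) whose database is keyed by VRF (which SDA calls a Virtual Network, or VN) as well as by endpoint, plus the edge node's decision to fall back to the border's PETR when a lookup comes back empty. The class and function names, VN names, and addresses are hypothetical; it illustrates the lookup logic, not LISP's wire protocol.

```python
from typing import Dict, Optional, Tuple

# Hypothetical loopback address of the border node acting as PETR.
BORDER_PETR_RLOC = "192.168.255.100"


class ControlPlaneNode:
    """Toy co-resident Map Server / Map Resolver keyed by (VN, EID)."""

    def __init__(self) -> None:
        self._db: Dict[Tuple[str, str], str] = {}

    def map_register(self, vn: str, eid: str, rloc: str) -> None:
        self._db[(vn, eid)] = rloc

    def map_resolve(self, vn: str, eid: str) -> Optional[str]:
        return self._db.get((vn, eid))


def encap_target(cp: ControlPlaneNode, vn: str, dst_eid: str) -> str:
    """Return the locator an edge node (ITR) should tunnel toward."""
    rloc = cp.map_resolve(vn, dst_eid)
    # No answer means the destination isn't in the fabric for this VN,
    # so fall back to the border's PETR: the "path of last resort".
    return rloc if rloc is not None else BORDER_PETR_RLOC


cp = ControlPlaneNode()
# Overlapping address space: the same IP lives in two VNs without conflict.
cp.map_register("Corporate", "10.1.0.20", "192.168.255.2")
cp.map_register("BuildingMgmt", "10.1.0.20", "192.168.255.7")

print(encap_target(cp, "Corporate", "10.1.0.20"))     # 192.168.255.2
print(encap_target(cp, "BuildingMgmt", "10.1.0.20"))  # 192.168.255.7
print(encap_target(cp, "Corporate", "203.0.113.5"))   # 192.168.255.100
```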

Data Plane

The SDA data plane is Virtual eXtensible LAN (VXLAN), and just like LISP, it's going to be familiar to anyone who's done any data center work in recent years. It's also a topic I've written about before.

Just like in the data center, VXLAN is used to eliminate Layer 2 links/trunks in the network while preserving Layer 2 semantics between endpoints within the same VLAN. VXLAN encapsulates the original Layer 2 frame inside a new UDP/IP packet and routes it across the fabric to the destination ETR. This encapsulation of the original packet is what creates the overlay network.

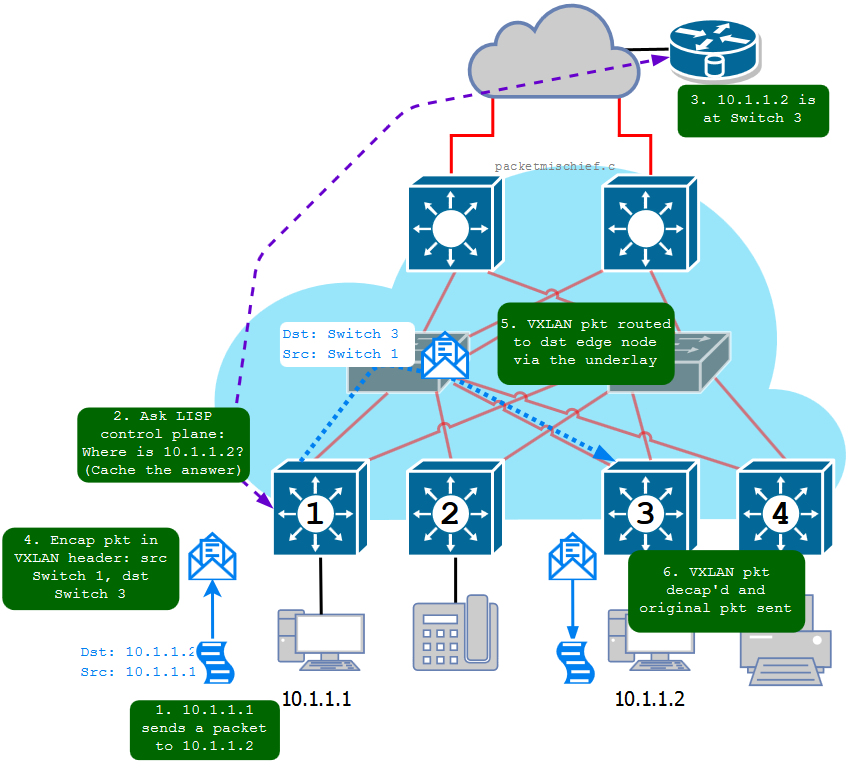

When a packet enters the fabric on an edge NE, the NE uses LISP to look up where the destination IP address lives in the fabric (or whether it lives outside the fabric). The result of this lookup is called a locator, which is really the loopback IP address of the (P)ETR where the destination IP is currently attached. The ingress NE now needs to get the packet to that specific egress NE, so it builds a VXLAN header and puts it on the front of the original packet, then adds new UDP and IP headers in front of that, with a destination IP of the egress NE's loopback and a source IP of its own loopback. This new, encapsulated packet is sent into the underlay and routed to the egress NE, where it's decapsulated and the original packet is forwarded onward.
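The encapsulation step can be sketched in a few lines. The VXLAN header layout (8 bytes: a flags byte with the "I" bit set, 24 reserved bits, a 24-bit VNI, and 8 more reserved bits) and UDP destination port 4789 come from RFC 7348; modeling the outer IP/UDP headers as a plain dict, the VNI value, and the loopback addresses are simplifications made for this sketch.

```python
import struct
from typing import Dict, Tuple

VXLAN_UDP_PORT = 4789  # IANA-assigned VXLAN destination port (RFC 7348)
VXLAN_FLAG_I = 0x08    # "I" flag: the VNI field is valid


def vxlan_header(vni: int) -> bytes:
    """8-byte VXLAN header: flags (8 bits), reserved (24), VNI (24), reserved (8)."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    return struct.pack("!II", VXLAN_FLAG_I << 24, vni << 8)


def encapsulate(inner_frame: bytes, vni: int, src_loopback: str, dst_loopback: str) -> Dict:
    """Model the outer IP/UDP headers as a dict; only the VXLAN header is real bytes."""
    return {
        "outer_ip": {"src": src_loopback, "dst": dst_loopback},
        "outer_udp": {"dst_port": VXLAN_UDP_PORT},
        "payload": vxlan_header(vni) + inner_frame,
    }


def decapsulate(packet: Dict) -> Tuple[int, bytes]:
    """Strip the VXLAN header at the egress node; return (VNI, original frame)."""
    flags_word, vni_word = struct.unpack("!II", packet["payload"][:8])
    if not (flags_word >> 24) & VXLAN_FLAG_I:
        raise ValueError("VNI flag not set")
    return vni_word >> 8, packet["payload"][8:]


frame = b"original Ethernet frame from the host"  # placeholder bytes
pkt = encapsulate(frame, vni=4099, src_loopback="192.168.255.1",
                  dst_loopback="192.168.255.2")
vni, inner = decapsulate(pkt)
print(vni, inner == frame)  # 4099 True
```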

I mentioned above that VRFs are used for macro-segmentation. An example use-case would be to create two logical networks across the fabric: one for regular corporate users and devices and one for the building management system (HVAC, etc.). To extend these logical networks end-to-end across the fabric, the VRF that a packet belongs to is encoded into the VXLAN header (in the VXLAN Network Identifier, or VNI, field) so that when the (P)ETR receives the VXLAN packet, it can forward that packet within the correct virtual network. A second use-case would be if, for whatever reason, you need to virtualize the LISP control plane, perhaps because of overlapping address space in the network. In SDA terminology, a VRF is referred to as a Virtual Network (VN).
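On the egress side, the VNI is what keeps the two logical networks apart. In this hypothetical sketch, the egress node uses the VNI from a decapsulated packet to pick the VRF first and only then looks up the destination IP, which is why the same address can safely exist in both VNs. The VN names, VNI numbers, and interface names are made up for illustration.

```python
from typing import Dict

# Hypothetical VN-to-VNI assignments (provisioned by the fabric, not by hand).
VN_TO_VNI: Dict[str, int] = {"Corporate": 4099, "BuildingMgmt": 4100}
VNI_TO_VN: Dict[int, str] = {vni: vn for vn, vni in VN_TO_VNI.items()}

# Per-VN host tables on one egress node; the same IP appears in both VNs.
egress_vrfs: Dict[str, Dict[str, str]] = {
    "Corporate": {"10.1.0.20": "Gi1/0/5 (laptop)"},
    "BuildingMgmt": {"10.1.0.20": "Gi1/0/9 (HVAC controller)"},
}


def egress_port(vni: int, dst_ip: str) -> str:
    """After decapsulation, the VNI picks the VRF; only then is dst_ip looked up."""
    vn = VNI_TO_VN[vni]
    return egress_vrfs[vn][dst_ip]


print(egress_port(4099, "10.1.0.20"))  # Gi1/0/5 (laptop)
print(egress_port(4100, "10.1.0.20"))  # Gi1/0/9 (HVAC controller)
```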

One of the benefits of SDA that I mentioned at the start was host mobility. This raises an interesting question, though: how are default gateways handled? When a host moves, how does it communicate with its default gateway? The answer is an anycast gateway: every edge NE is configured with the same Switch Virtual Interface (SVI), with the same IP address and the same MAC address. In essence, a host's gateway exists on every single edge switch, so no matter where the host moves on the network, its default gateway is always right there.
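A quick way to see why the anycast gateway makes mobility painless: the host's ARP cache entry for its gateway never goes stale, because every edge node answers with the same IP and MAC. This is a minimal simulation under that assumption; the VLAN, IP, and (locally administered) MAC values are hypothetical, and a real edge switch obviously does far more than answer ARP.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Svi:
    vlan: int
    ip: str
    mac: str


# One gateway definition pushed to every edge node (all values hypothetical).
ANYCAST_GW = Svi(vlan=100, ip="10.1.0.1", mac="02:00:0a:01:00:01")
edge_svis: Dict[str, Svi] = {name: ANYCAST_GW for name in ("edge-1", "edge-2", "edge-3")}


def arp_reply(edge: str, target_ip: str) -> Optional[str]:
    """MAC address an edge node answers with when a host ARPs for target_ip."""
    svi = edge_svis[edge]
    return svi.mac if target_ip == svi.ip else None


# The host learns its gateway's MAC while attached to edge-1...
host_arp_cache = {"10.1.0.1": arp_reply("edge-1", "10.1.0.1")}
# ...then moves to another building behind edge-3. Its cached entry still
# matches, because edge-3 owns the identical gateway IP and MAC.
print(arp_reply("edge-3", "10.1.0.1") == host_arp_cache["10.1.0.1"])  # True
```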

Policy Plane

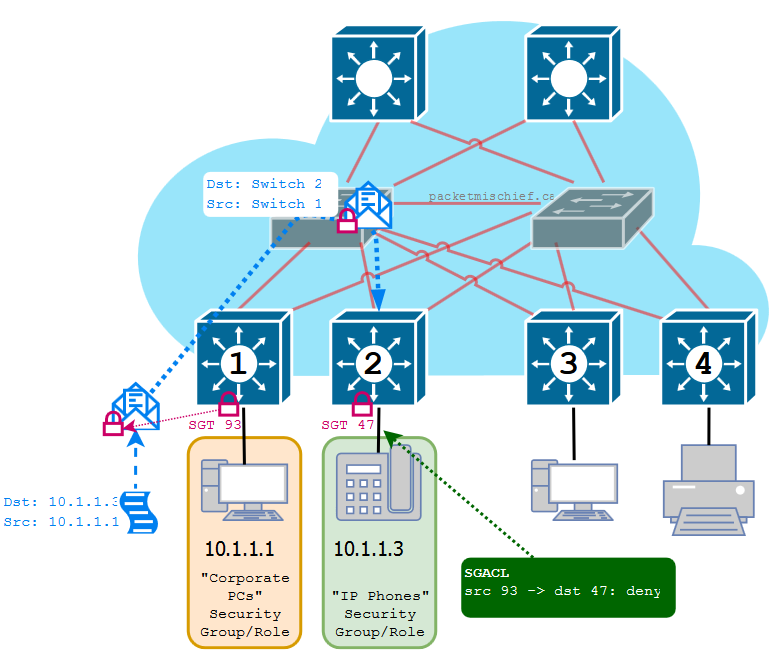

Within the network fabric itself, security policy is implemented by way of Scalable Group Tags (SGTs) and Scalable Group Access Control Lists (SGACLs).

If you've heard of or worked with Security Group Tags, they are the same thing as Scalable Group Tags. For some reason, Marketing decided the name had to be changed when SGTs became part of SDA.

An SGT is a number (very much like a VLAN tag) that is assigned to a {user,device} tuple based on the security group or role that they are assigned to. For example, if I log onto the network with my work-issued laptop, I may be assigned to the "Corporate Users" role, which uses an SGT of 5. If I also grab my personal iPad and log onto the network, I may be assigned to the "BYOD Users" role, which uses an SGT of 13. As each device attaches to the fabric, the edge NE will be instructed which tag to assign to that {user,device} pair (more on that in a bit). When the device sends packets into the network, the edge NE tags the packets with the assigned SGT by placing the SGT into the VXLAN header. These SGTs are preserved end-to-end within the fabric so that when the egress NE receives the packets, it knows what security group and/or role the sender belongs to. When the egress NE attempts to forward the packet out of the fabric towards its final destination, it first looks up the SGT assigned on the destination port and then consults its SGACL: is this source SGT allowed to talk to this destination SGT?
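Here's a sketch of the tag's journey, assuming the SGT rides in the 16-bit Group Policy ID field of the VXLAN Group Policy Option (VXLAN-GPO) header, which is the VXLAN variant Cisco's fabric uses. The bit layout below follows the VXLAN-GPO Internet-Draft and is worth double-checking against it. The egress check is a dictionary lookup keyed by (source SGT, destination SGT); the "Finance Servers" group, its tag of 20, and the default-deny action are assumptions (the real default action is configurable).

```python
import struct
from typing import Dict, Tuple

GPO_FLAG_G = 0x8000  # Group Policy ID field is present
GPO_FLAG_I = 0x0800  # VNI field is valid


def vxlan_gpo_header(vni: int, sgt: int) -> bytes:
    """Flags (16 bits), Group Policy ID (16), VNI (24), reserved (8)."""
    return struct.pack("!HHI", GPO_FLAG_G | GPO_FLAG_I, sgt, vni << 8)


def read_vni_and_sgt(header: bytes) -> Tuple[int, int]:
    flags, sgt, vni_word = struct.unpack("!HHI", header[:8])
    if not flags & GPO_FLAG_G:
        raise ValueError("no Group Policy ID in header")
    return vni_word >> 8, sgt


# SGTs 5 and 13 are the example values from the text; 20 is hypothetical.
CORPORATE_USERS, BYOD_USERS, FINANCE_SERVERS = 5, 13, 20

# SGACL keyed by (source SGT, destination SGT).
sgacl: Dict[Tuple[int, int], str] = {
    (CORPORATE_USERS, FINANCE_SERVERS): "permit",
    (BYOD_USERS, FINANCE_SERVERS): "deny",
}
DEFAULT_ACTION = "deny"  # assumption: the real default action is configurable


def egress_permits(header: bytes, dst_port_sgt: int) -> bool:
    """Egress check: is this source SGT allowed to talk to this destination SGT?"""
    _, src_sgt = read_vni_and_sgt(header)
    return sgacl.get((src_sgt, dst_port_sgt), DEFAULT_ACTION) == "permit"


laptop_pkt = vxlan_gpo_header(vni=4099, sgt=CORPORATE_USERS)
ipad_pkt = vxlan_gpo_header(vni=4099, sgt=BYOD_USERS)
print(egress_permits(laptop_pkt, FINANCE_SERVERS))  # True
print(egress_permits(ipad_pkt, FINANCE_SERVERS))    # False
```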

The mapping of SGT to {user,device} and the SGACL are downloaded to the network elements from the Cisco Identity Services Engine (ISE). ISE is a sophisticated policy engine that performs authentication and authorization of devices and users as they attach to the network. ISE can examine the rich context surrounding a {user,device} attaching to the network, including type of device (laptop, iPad), device ownership (corporate, personal), location (headquarters, field office), time of day, and much, much more. Based on this examination, ISE will classify the {user,device} as belonging to a pre-defined security group and will propagate that group's SGT to the NE where the user has attached.

ISE takes care of assigning SGTs to groups automatically. While you are free to allocate a specific SGT number to a group if you desire, it's not a requirement; ISE will manage allocation of the tags for you. Unless you're really getting into the weeds of SDA, you never need to know what the actual tag values are; you'll deal with friendly security group names instead.
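A toy version of those two ISE jobs (classifying a {user,device} into a group, and handing out tags automatically unless an admin pins one) might look like this. The one-line authorization rule, the `AttachContext` fields, the starting tag value, and the allocation order are all hypothetical; ISE's actual policy evaluation and tag allocation are considerably richer.

```python
from dataclasses import dataclass
from itertools import count
from typing import Dict, Optional


@dataclass(frozen=True)
class AttachContext:
    """A small slice of the context ISE can weigh; real policy uses far more."""
    device_type: str  # e.g. "laptop", "ipad"
    ownership: str    # "corporate" or "personal"


def classify(ctx: AttachContext) -> str:
    """Hypothetical authorization rule: ownership decides the security group."""
    return "Corporate Users" if ctx.ownership == "corporate" else "BYOD Users"


class SgtAllocator:
    """Hands out a tag per group automatically; an admin may pin a value instead."""

    def __init__(self, first_tag: int = 2) -> None:  # starting value is arbitrary
        self._next = count(first_tag)
        self._tags: Dict[str, int] = {}

    def tag_for(self, group: str, pinned: Optional[int] = None) -> int:
        if group in self._tags:
            return self._tags[group]
        used = set(self._tags.values())
        if pinned is not None:
            if pinned in used:
                raise ValueError(f"SGT {pinned} is already allocated")
            tag = pinned
        else:
            tag = next(self._next)
            while tag in used:  # skip values an admin pinned earlier
                tag = next(self._next)
        self._tags[group] = tag
        return tag


allocator = SgtAllocator()
for ctx in (AttachContext("laptop", "corporate"), AttachContext("ipad", "personal")):
    group = classify(ctx)
    print(group, allocator.tag_for(group))  # admins see the name; the tag is bookkeeping
```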

The SGACL is just like an IP ACL except it uses source/destination SGTs instead of source/destination IP addresses. The SGACL is built within the SDA management tool: the Digital Network Architecture Center (DNA-C). Using the graphical, web-based interface to DNA-C, the concepts of SGTs and SGACLs are abstracted away and the administrator simply defines a policy such as "the Accounting Dept may not exchange traffic with the HR Dept". Under the covers, DNA-C and ISE work together to build the resulting SGTs, SGACLs, and network policy.
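Conceptually, what DNA-C and ISE do with a rule like "the Accounting Dept may not exchange traffic with the HR Dept" is translate friendly group names into SGT pairs. The sketch below shows only that translation step, under assumptions: the tag values are invented, and every rule is treated as bidirectional because that's what "may not exchange traffic" implies. It doesn't reflect how DNA-C actually stores or pushes policy.

```python
from typing import Dict, List, Tuple

# Hypothetical tags, as ISE might have allocated them behind the scenes.
group_tags: Dict[str, int] = {"Accounting Dept": 17, "HR Dept": 18}

# Intent in friendly names, the way an administrator expresses it.
intent: List[Tuple[str, str, str]] = [("Accounting Dept", "HR Dept", "deny")]


def compile_to_sgacl(intent: List[Tuple[str, str, str]],
                     tags: Dict[str, int]) -> Dict[Tuple[int, int], str]:
    """Translate (src group, dst group, action) rules into an SGT-keyed matrix."""
    matrix: Dict[Tuple[int, int], str] = {}
    for src_group, dst_group, action in intent:
        src, dst = tags[src_group], tags[dst_group]
        # "May not exchange traffic" constrains both directions.
        matrix[(src, dst)] = action
        matrix[(dst, src)] = action
    return matrix


print(compile_to_sgacl(intent, group_tags))  # {(17, 18): 'deny', (18, 17): 'deny'}
```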

More Reading

This post is the first in what I plan to make into a series of posts on Software Defined Access. As more posts are published, I will link them here. For now, please enjoy these posts on related protocols that are used in SDA.

Disclaimer: The opinions and information expressed in this blog article are my own and not necessarily those of Cisco Systems.