IAM is the Perimeter

A colleague of mine recently quipped, "'The perimeter' in AWS is actually defined by Identity and Access Management (IAM)." After some reflection, I think my colleague is spot on.

You see, as someone with a background in networking who has spent the majority of their IT career (thus far) in on-premises settings, I have always thought of "the perimeter" as a network-centric construct, vividly defined by firewalls, proxies, and border routers. The boundary created by "the perimeter" could not be any easier to define: if the resource, data, or system is on the "inside" or "trusted" side of the firewall, then it's inside the perimeter. Otherwise, it's outside the perimeter. That boundary between "inside" and "outside" cuts right through the firewall.

However, a network-centric paradigm of the perimeter doesn't hold in the public cloud.

On the surface, it may appear to hold because you can use constructs such as Amazon Virtual Private Cloud (VPC) to create your own private network on the cloud. And as this network clearly has a boundary between "inside" and "outside", it therefore has a vivid perimeter. However, your private network only hosts your private resources. The cloud provider's API and service endpoints exist outside your private network.

API endpoints that allow control plane actions such as "create a storage bucket" and data plane actions such as "delete this object from that storage bucket" all exist in public IP space, outside your private network on the cloud. So, by the network-centric definition of the perimeter, your resources and data may be accessible from outside the perimeter.

In the remainder of this post I will dive deeper into the idea that the perimeter in AWS is defined by IAM and highlight some AWS IAM features you can use to define and enforce the shape of your perimeter.

You can't firewall the cloud

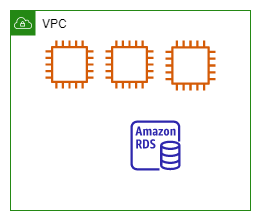

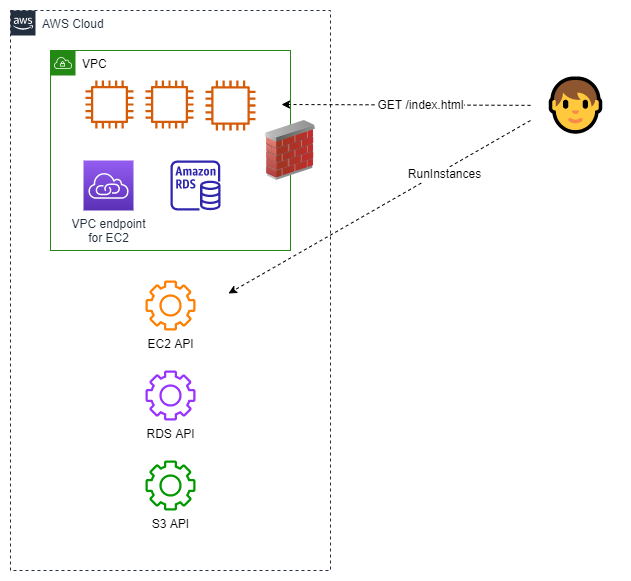

Let's look a little deeper at the network-centric view of the perimeter. Below is a simple architecture showing a VPC, a private network on the cloud, with some compute and a database instance attached to it.

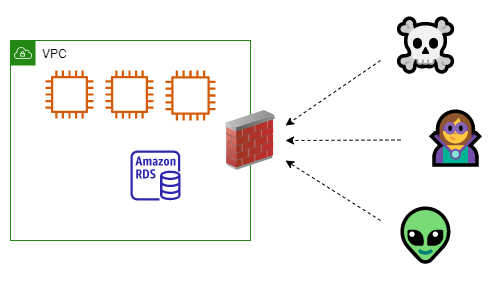

Everything inside the "VPC" box is inside the perimeter; everything else (which isn't shown in the diagram) is outside. Good. Now you can put a firewall at the VPC perimeter to act as a central policy enforcement point. A firewall is shown in the updated diagram below.

Even better. Now all traffic from "outside" must pass the firewall to reach the "inside". The resources in your VPC have a layer of protection. You could also add additional layers by using security groups and network access control lists (NACLs).

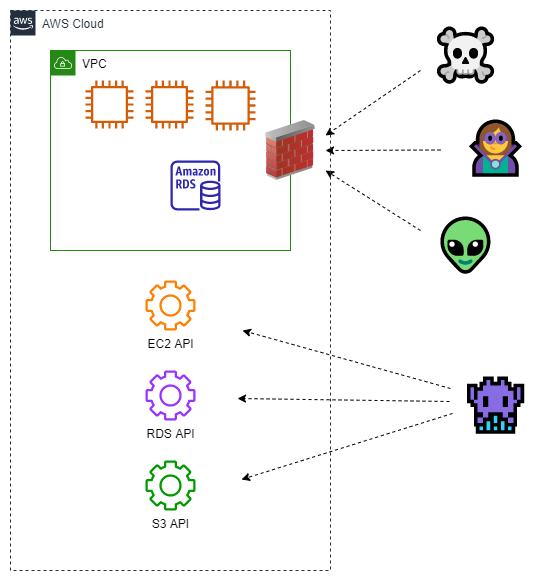

Let's complete the diagram now by adding some AWS service endpoints.

This is a more complete picture of how your cloud resources are accessed and controlled. Some resources, such as web and database servers, sit inside your VPC. The elements listed below sit outside your VPC:

- Control plane API endpoints. For example, the APIs used to create, update, and delete the web and database instances are accessed via endpoints which exist in public IP space.

- Data plane API endpoints. The APIs used to "do work" with cloud services, such as putting, getting, and deleting objects in a storage bucket, are accessed via endpoints which exist in public IP space.

You can see from the diagram above that the elements external to the VPC are protected neither by the firewall nor by any security group or NACL that you manage, because firewalls, security groups, and NACLs are always tied to a VPC. The network-centric controls don't affect the elements outside the VPC, even though those elements provide access to and control over your cloud resources and data.

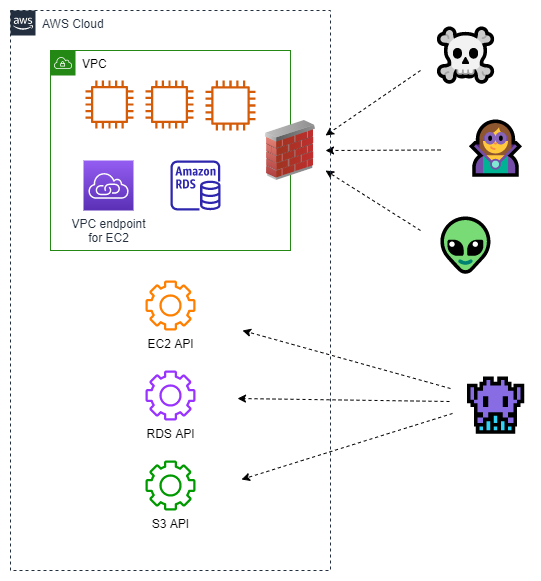

If you're experienced with AWS, you may be thinking that using VPC endpoints (powered by AWS PrivateLink) may somehow change these circumstances. VPC endpoints allow you to create an API endpoint within your VPC, avoiding the need for your resources to use the related public endpoint. Since VPC endpoints attach to your VPC, they exist within your network perimeter. The diagram below shows a VPC endpoint attached to the VPC.

VPC endpoints are a convenience for your VPC-attached resources. VPC endpoints change nothing from the perspective of anything or anyone outside the VPC. The public endpoints still exist, are still accessible, and still sit outside the network-centric perimeter.

Responsibility for the perimeter is shared

If the public endpoints aren't subject to network-centric controls, who is responsible for their security? What, if any, levers do you have to secure those endpoints?

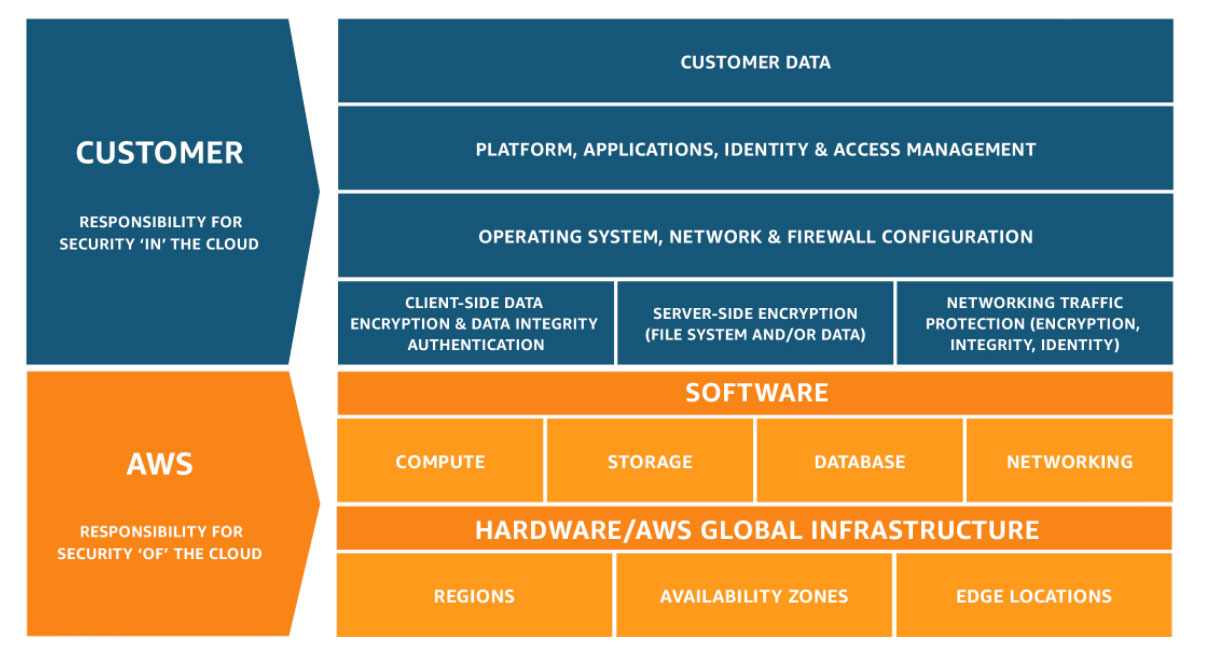

AWS uses a shared responsibility model to (a) codify the concept that security in the cloud is shared between you and AWS, and (b) describe where the line is drawn between your and AWS' responsibilities. The model is shown in the figure below.

AWS is responsible for "security of the cloud" which includes physical buildings, the compute, network, and storage systems which cloud services run on, and the software components that drive cloud services.

You are responsible for how cloud services are configured, the use/non-use of cloud features, such as encryption and logging, and IAM policy definition and application.

Under this model:

- AWS is responsible for securing API endpoint infrastructure. AWS manages and secures the hardware; manages, secures, and patches the software; monitors and responds to security events; and scales the infrastructure in response to demand. AWS delivers on these responsibilities without any intervention from you.

- You are responsible for using IAM to control use of API endpoints. How users authenticate to AWS, whether by static password or identity federation, is managed by you. Whether an API call is allowed or denied is controlled via IAM policies which are managed by you. The use of access keys and multi-factor authentication (MFA) is also managed by you.
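To make the second bullet concrete, here is a minimal sketch of an identity policy that denies a couple of sensitive API calls whenever the caller has not authenticated with MFA. It only builds and prints the policy document; it does not call AWS. The helper name `deny_without_mfa` and the choice of actions are my own illustrative assumptions, while `aws:MultiFactorAuthPresent` is a real global condition key.

```python
import json


def deny_without_mfa(actions):
    """Build an identity policy that denies `actions` when MFA is absent.

    Hypothetical helper for illustration only.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenySensitiveActionsWithoutMFA",
                "Effect": "Deny",
                "Action": list(actions),
                "Resource": "*",
                # BoolIfExists also matches requests where the key is missing,
                # such as calls signed with long-term access keys.
                "Condition": {
                    "BoolIfExists": {"aws:MultiFactorAuthPresent": "false"}
                },
            }
        ],
    }


# The actions below are examples, not a recommended list.
policy = deny_without_mfa(["ec2:TerminateInstances", "s3:DeleteBucket"])
print(json.dumps(policy, indent=2))
```

Because an explicit deny overrides any allow, a statement like this acts as a guardrail on top of whatever other permissions the user or role holds.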

When both parties work together under the shared responsibility model, access to resources and data via public API endpoints can be secured.

IAM policies aren't just for identities

AWS IAM's reach extends beyond IAM users and roles: IAM policies can also be applied to resources.

Resource policies are IAM policies attached to a resource such as an Amazon Simple Storage Service (S3) bucket or an AWS Key Management Service (KMS) key. Resource policies make it convenient to centrally define a policy which governs access to the resource. In particular, you can use conditions to allow/deny access based on the caller's source IP address, whether or not the calling principal is part of your AWS Organizations organization, and many more conditions.

Resource policies are a powerful tool to help you define your perimeter because they (a) are defined on the resource and applied to all principals accessing the resource which can simplify management and auditing of policies, and (b) can be applied to resources which do not attach to a VPC, such as Amazon S3 buckets and AWS KMS keys. Resource policies provide a means to limit access based on network-centric properties, such as IP address, as well as IAM-centric properties, such as organization ID.
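As a rough illustration of the two kinds of conditions described above, the sketch below builds an S3 bucket policy with one statement keyed on organization ID and one keyed on source IP address. The bucket name, organization ID, and CIDR range are placeholders I made up, and the function name is hypothetical. The condition keys `aws:PrincipalOrgID` and `aws:SourceIp` are real. Treat this as a starting point rather than a production policy: a broad deny like this can also block AWS services acting on your behalf, so real data perimeter policies usually carry additional exceptions.

```python
import json

BUCKET = "example-bucket"         # hypothetical bucket name
ORG_ID = "o-exampleorgid"         # hypothetical AWS Organizations ID
EXPECTED_CIDR = "203.0.113.0/24"  # documentation-only address range


def bucket_perimeter_policy(bucket, org_id, cidr):
    """Build a bucket policy with an identity-centric and a network-centric deny."""
    resources = [f"arn:aws:s3:::{bucket}", f"arn:aws:s3:::{bucket}/*"]
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # IAM-centric property: caller must belong to the organization.
                "Sid": "DenyPrincipalsOutsideOrg",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                "Resource": resources,
                "Condition": {"StringNotEquals": {"aws:PrincipalOrgID": org_id}},
            },
            {
                # Network-centric property: caller must come from an expected range.
                "Sid": "DenyUnexpectedNetworks",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                "Resource": resources,
                "Condition": {"NotIpAddress": {"aws:SourceIp": cidr}},
            },
        ],
    }


bucket_policy = bucket_perimeter_policy(BUCKET, ORG_ID, EXPECTED_CIDR)
print(json.dumps(bucket_policy, indent=2))
```

The two conditions sit in separate statements on purpose: conditions inside a single statement are combined with AND, so putting both in one deny would only block callers who fail both checks.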

To learn more about which services support resource policies, refer to AWS services that work with IAM.

Another type of resource which supports resource policies is VPC endpoints. As mentioned above, VPC endpoints provide private access in your VPC to many AWS services. You're able to control access to services by attaching a policy, referred to as an "endpoint policy", to the endpoint. Endpoint policies allow fine-grained access control based on the IAM principal (the user or role), the resource being accessed, the action being taken, and IAM conditions such as source IP address. VPC endpoints are a powerful melding of network-centric functionality and IAM-centric access control.
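Here is a minimal sketch of what an endpoint policy might look like for an S3 VPC endpoint. It allows object reads and writes on a single bucket, and only for principals in one organization. As before, the bucket name, organization ID, and function name are illustrative assumptions, and the code only builds the JSON document rather than attaching it to an endpoint.

```python
import json


def s3_endpoint_policy(bucket, org_id):
    """Build an endpoint policy limiting which principals, actions, and resources pass.

    Hypothetical helper for illustration only.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowOrgPrincipalsToOneBucket",
                "Effect": "Allow",
                "Principal": "*",                          # narrowed by the condition below
                "Action": ["s3:GetObject", "s3:PutObject"],  # the actions being taken
                "Resource": f"arn:aws:s3:::{bucket}/*",    # the resource being accessed
                "Condition": {"StringEquals": {"aws:PrincipalOrgID": org_id}},
            }
        ],
    }


# "example-bucket" and "o-exampleorgid" are made-up placeholder values.
endpoint_policy = s3_endpoint_policy("example-bucket", "o-exampleorgid")
print(json.dumps(endpoint_policy, indent=2))
```

An endpoint policy does not grant permissions by itself. It only limits what can pass through the endpoint, so the caller still needs permissions from their own identity policy.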

Endpoint policies are the third type of policy mentioned so far. In the case where a resource such as a KMS key is being accessed via a VPC endpoint, the policy assigned to the calling user or role, the VPC endpoint policy, and the resource policy attached to the key must all allow access in order for access to be granted.
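The "all three must allow" rule can be sketched as a tiny truth table. This is a toy model of the statement above and nothing more: it is not AWS's policy evaluation logic, which also accounts for explicit denies, service control policies, permissions boundaries, and cross-account rules. The function and parameter names are my own.

```python
from itertools import product


def access_granted(identity_allows, endpoint_allows, resource_allows):
    """Toy model: access via a VPC endpoint needs an allow from all three policies."""
    return identity_allows and endpoint_allows and resource_allows


# Enumerate every combination of (identity, endpoint, resource) outcomes.
outcomes = {
    combo: access_granted(*combo) for combo in product([True, False], repeat=3)
}
granted = [combo for combo, ok in outcomes.items() if ok]
print(granted)  # [(True, True, True)]
```

Of the eight possible combinations, only one results in access, which is why a missing allow in any single policy is enough to block the request.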

Network controls still have a place

Should you eliminate your network-centric controls then? No, absolutely not.

In the diagram below, the user is making two requests: one is a call to the EC2 RunInstances API, which is used to launch a compute instance, and the second is a GET request to a web server running on an EC2 instance in the VPC.

The API call is subject to IAM policy. The web request is not subject to IAM policy. Under the shared responsibility model, you are responsible for controlling access to your applications and data. The software, data, and configuration within an EC2 instance must be secured by you and one of the ways you can do that is to use network-centric controls. Based on the need, the control being used could be one or more of: security groups, NACLs, or firewalls.
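To show what a network-centric control on the web request might look like, here is a small sketch of a security-group-style ingress rule and a local check against it. The rule uses the same field names as the `IpPermissions` structure in the EC2 API, but the `admits` helper is a simplification I wrote for illustration and the CIDR range is a documentation-only placeholder. Nothing here talks to AWS.

```python
import ipaddress

# Allow HTTPS only from one address range (hypothetical values).
HTTPS_FROM_OFFICE = {
    "IpProtocol": "tcp",
    "FromPort": 443,
    "ToPort": 443,
    "IpRanges": [
        {"CidrIp": "203.0.113.0/24", "Description": "hypothetical office range"}
    ],
}


def admits(rule, source_ip, port):
    """Return True if the rule would admit TCP traffic from source_ip to port."""
    in_port_range = rule["FromPort"] <= port <= rule["ToPort"]
    addr = ipaddress.ip_address(source_ip)
    in_cidr = any(
        addr in ipaddress.ip_network(r["CidrIp"]) for r in rule["IpRanges"]
    )
    return in_port_range and in_cidr


print(admits(HTTPS_FROM_OFFICE, "203.0.113.10", 443))  # True
print(admits(HTTPS_FROM_OFFICE, "198.51.100.7", 443))  # False
print(admits(HTTPS_FROM_OFFICE, "203.0.113.10", 80))   # False
```

Notice that the decision depends only on addresses and ports. The identity of the caller never enters into it, which is the key difference from the IAM policies that govern the RunInstances call.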

Depending on the AWS service being used, the line between you and AWS in the shared responsibility model can move up, allowing you to offload more responsibility to AWS. A good example of when the line moves up is when using Amazon Relational Database Service (RDS). Unlike with EC2 instances, AWS manages the software inside RDS instances. However, security is still a shared responsibility. Refer to the RDS documentation for information on how to apply the shared responsibility model to RDS.

To Recap

Network-centric controls, such as firewalls, aren't able to cover the entire surface of the public cloud and are therefore inadequate at defining "the perimeter" in the cloud. AWS IAM allows you to define and manage access policies on identities, such as users and roles, and resources, such as S3 buckets, KMS keys, and VPC endpoints. These policies govern access to your resources and data and, when considered as part of the shared responsibility model, enable you to manage a more realistic security perimeter, one based on identities and their access and not on purely network-centric properties such as IP addresses.

Reference

Because of the importance of IAM in defining the perimeter, it's critical to understand how to create IAM policies and understand where they can be applied. To help you increase your understanding, I recommend reading the articles below.

- Five Functional Facts about AWS Identity and Access Management (this blog)

- Five Functional Facts about AWS Service Control Policies (this blog)

- Building a Data Perimeter on AWS (AWS paper)

- Establishing a data perimeter on AWS: Allow access to company data only from expected networks (AWS blog)

- AWS IAM policy evaluation logic (AWS documentation)

- Reduce Cost and Increase Security with Amazon VPC Endpoints (AWS blog)

Disclaimer: The opinions and information expressed in this blog article are my own and not necessarily those of Amazon Web Services or Amazon, Inc.