Moving to ZFS

My file server is full and I have no options for expanding it. The server is a white box system running FreeBSD with a hardware RAID card and 400GB of RAID-5 storage. The hardware is old, the hard drives are old and I can't expand it. It's time for something new.

Table of Contents⌗

- Requirements

- ZFS as a Solution

- ZFS Bonus Features

- Operating System

- Hardware

- ZFS Configuration

- Migrating Data from FreeBSD to Solaris

- The Results

- Pictures

Requirements⌗

This is the list of requirements (in order of importance) I decided the new system has to meet:

- Data integrity: Be smart enough to detect (and maybe correct) data corruption due to bad cables, failing drives, bit rot, etc.

- Data redundancy: If a drive in the array fails the data should remain safe.

- Expandability: When (not "if") the array gets full, allow capacity to be added without having to recreate the whole array.

- Performance: The system needs to keep up with streaming music, reading and writing of large ISOs and streaming of HD video files.

- Notification of hardware faults: System must detect and send notification for failed/failing drives.

- Useful management and configuration tools: The admin tools must be functional and ideally intuitive, but they do not need to be flashy point-and-click interfaces. CLI is more than ok.

ZFS as a Solution⌗

Around the time I was doing my research (early 2008), Sun was gaining a lot of ground with their Zettabyte File System (ZFS). From the Wikipedia page on ZFS:

ZFS is a combined file system and logical volume manager designed by Sun Microsystems. The features of ZFS include data integrity (protection against bit rot, etc), support for high storage capacities, integration of the concepts of filesystem and volume management, snapshots and copy-on-write clones, continuous integrity checking and automatic repair, RAID-Z and native NFSv4 ACLs. ZFS is implemented as open-source software, licensed under the Common Development and Distribution License (CDDL).

ZFS was immediately appealing because it addressed a lot of my requirements and also got me away from having to purchase an expensive RAID card since ZFS was also a volume manager. And since Sun's target market for this technology was the enterprise, I figured it would definitely do a good job for me at home.

Here's how ZFS addressed my requirements.

Data Integrity⌗

ZFS uses block-level checksums to catch and repair data corruption as data is read from the disk. When the file system is configured for mirroring or RAID, the data is automatically repaired using one of the alternate copies of the data. ZFS also uses copy-on-write for all write transactions. This ensures that the on-disk state is always valid (no need for fsck). You can also force a "scrub" of the storage pool which causes ZFS to traverse the entire pool and verify each block against its checksum and then repair it if necessary.
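To make the "detect and repair on read" idea concrete, here is a minimal toy sketch in Python. It is not how ZFS is implemented: the function names are made up, SHA-256 simply stands in for whatever checksum the pool uses, and a two-element list stands in for a two-way mirror.

```python
import hashlib


def checksum(block: bytes) -> str:
    """Hex digest used to validate a block (SHA-256 chosen only for illustration)."""
    return hashlib.sha256(block).hexdigest()


def read_with_self_heal(copies, expected):
    """Return the first copy that matches the checksum and rewrite any bad copies."""
    good = next((bytes(c) for c in copies if checksum(bytes(c)) == expected), None)
    if good is None:
        raise IOError("all copies failed checksum verification")
    for c in copies:
        if checksum(bytes(c)) != expected:
            c[:] = good  # repair the damaged copy in place
    return good


data = b"family photos"
expected = checksum(data)  # stored separately from the data it describes
mirror = [bytearray(data), bytearray(data)]  # hypothetical two-way mirror
mirror[0][0] ^= 0xFF  # simulate bit rot on the first disk

result = read_with_self_heal(mirror, expected)
print(result == data)            # True: the read returned good data
print(bytes(mirror[0]) == data)  # True: the bad copy was repaired
```

A scrub is conceptually the same check run over every block in the pool rather than only the blocks that happen to be read.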

Data Redundancy⌗

ZFS isn't just a file system; it's also a volume manager. This means you can configure stripes, mirrors and RAID sets using ZFS. Using mirrored sets or RAID-Z/RAID-Z2 (ZFS's own implementation of RAID-5 and RAID-6, respectively) provides data redundancy and protection against disk failures. ZFS also has the ability to store up to 3 copies of user data on the same physical disk. ZFS does its best to physically separate these copies so that if the drive becomes damaged there is a maximum chance that at least one copy of the data survives. This feature is above and beyond any mirroring or RAID that has been set up.
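For anyone who hasn't seen how single-parity redundancy survives a lost disk, here is a small Python sketch of the classic RAID-5 style XOR trick. It only illustrates the principle; RAID-Z's actual on-disk layout is different, and the block contents and helper name are invented for the example.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR a list of equal-length byte strings together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# Three data "disks" plus one parity block computed from them
data_blocks = [b"AAAA", b"BBBB", b"CCCC"]
parity = xor_blocks(data_blocks)

# Pretend the second disk died: rebuild it from the survivors plus parity
survivors = [data_blocks[0], data_blocks[2], parity]
rebuilt = xor_blocks(survivors)
print(rebuilt)  # b'BBBB'
```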

Expandability⌗

Because ZFS is acting as the volume manager, the storage array is no longer tied to a RAID card. I can combine drives that are connected to the on-board SATA ports with drives that are connected to an HBA or even drives that are connected via USB or eSATA. This allows the system to grow on demand. Instead of building a massive storage pool on day #1 by purchasing an expensive RAID card and enough drives to completely load the card up (a very expensive option), I can buy enough drives to fit my immediate, day #1 needs and grow the storage gradually. Also, I don't have to worry about what to do if I run out of SATA ports. When the on-board ports are all used, I can buy an HBA and begin plugging new drives into that. ZFS doesn't care where the drives are. The one limitation is that an existing RAID-Z/Z2/Z3 set cannot itself be expanded by adding single drives; the pool grows by adding whole new sets of drives alongside it.

Performance⌗

When you're planning on using SATA drives you basically get what you get. Modern CPUs are so powerful that they can easily handle the ZFS parity calculations, checksumming, and compression. The bottleneck for the typical home user will almost always be the SATA drives. The good news is that most home file servers are used for media (music and video) and maybe for storing large files such as ISOs or backups from other computers. These uses benefit from SATA's decent sequential read/write speeds.

Notification of Hardware Faults⌗

ZFS does have some smarts built in to detect failed drives and take corrective action. It does rely on the drive controller to report faults as well. Through checksumming ZFS can detect if a drive is serving corrupt data which can be a sign of a failing drive.
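Detection is only half of the requirement; something still has to send the notification. One possible approach, sketched below in Python, is to poll `zpool status -x` from a scheduled job and raise an alert whenever the output is anything other than the usual healthy message. The function names are hypothetical, the exact message text is an assumption that should be checked against your own system, and how the alert is delivered (mail, pager, etc.) is left out.

```python
import subprocess

# Assumption: `zpool status -x` prints this line when nothing is wrong
HEALTHY_MESSAGE = "all pools are healthy"


def needs_alert(status_output: str) -> bool:
    """Return True when the status text is anything other than the healthy message."""
    return status_output.strip() != HEALTHY_MESSAGE


def check_pools() -> bool:
    """Run `zpool status -x` and report whether an alert should be sent."""
    result = subprocess.run(["zpool", "status", "-x"], capture_output=True, text=True)
    return needs_alert(result.stdout)


# Demonstration on canned text, so it runs on a machine without ZFS
print(needs_alert("all pools are healthy\n"))             # False
print(needs_alert("  pool: tank\n state: DEGRADED\n"))    # True
```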

Useful Management and Configuration Tools⌗

There are exactly two commands needed to manage ZFS: zpool(1M) and zfs(1M). The syntax for each is simple and intuitive and the documentation of all the arguments and options is very good.

ZFS Bonus Features⌗

Aside from all the features in ZFS that addressed my requirements, there were a lot of others too.

Cheap (as in "free")⌗

ZFS is open source software developed by Sun and is a part of Solaris OS. Solaris is available from Sun for free with a license to use it at home or commercially.

Snapshots⌗

All ZFS file systems can be snapshotted. Snapshots are stored in the main storage pool, so there is no need to dedicate storage for them. Because ZFS uses copy-on-write semantics, the initial size of a snapshot is zero bytes. The size only increases as data is written to/deleted from the file system that the snapshot belongs to.
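The "zero bytes at first, grows as the live data changes" behaviour is easier to see in a toy model. The Python sketch below is purely illustrative: `ToyFilesystem` is a made-up class, and a dictionary of byte strings stands in for on-disk blocks.

```python
class ToyFilesystem:
    """Toy copy-on-write store: maps block ids to immutable byte strings."""

    def __init__(self):
        self.live = {}
        self.snapshots = {}

    def write(self, block_id, data):
        # Build a new block map; existing blocks are never modified in place
        self.live = {**self.live, block_id: data}

    def delete(self, block_id):
        self.live = {k: v for k, v in self.live.items() if k != block_id}

    def snapshot(self, name):
        # A snapshot only keeps references to the blocks that exist right now
        self.snapshots[name] = self.live

    def snapshot_unique_bytes(self, name):
        """Bytes referenced by the snapshot but no longer by the live file system."""
        snap = self.snapshots[name]
        return sum(len(v) for k, v in snap.items() if self.live.get(k) is not v)


fs = ToyFilesystem()
fs.write(1, b"a" * 4096)
fs.write(2, b"b" * 4096)
fs.snapshot("monday")
size_at_creation = fs.snapshot_unique_bytes("monday")

fs.write(1, b"c" * 4096)  # overwrite a block: the snapshot keeps the old copy
size_after_overwrite = fs.snapshot_unique_bytes("monday")

fs.delete(2)              # delete a block: the snapshot keeps that one too
size_after_delete = fs.snapshot_unique_bytes("monday")

print(size_at_creation, size_after_overwrite, size_after_delete)  # 0 4096 8192
```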

Compression⌗

ZFS has built-in compression which can be enabled on a per file system basis. Instead of compressing your data using a compression utility, you can let ZFS transparently take care of it for you. The ZFS management tools also let you see what kind of compression ratio you're achieving.
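How much compression buys you depends entirely on the data. The Python sketch below uses `zlib` as a stand-in (it is not claimed to be the algorithm your pool uses) to show the kind of ratio calculation involved; the sample data is invented. On a real system the equivalent figure is reported by the `compressratio` property.

```python
import os
import zlib


def compress_ratio(data: bytes) -> float:
    """Uncompressed size divided by compressed size (higher is better)."""
    return len(data) / len(zlib.compress(data))


# Repetitive text compresses very well...
text_like = b"log line: everything is fine\n" * 1000
# ...while random bytes (similar to already-compressed media) barely change
random_like = os.urandom(len(text_like))

print(f"text-like:   {compress_ratio(text_like):.1f}x")
print(f"random-like: {compress_ratio(random_like):.2f}x")
```

For a server that mostly holds music and video, which are already compressed, expect ratios close to 1.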

Active Community⌗

Over at the OpenSolaris ZFS Community you'll find introductory information about ZFS, an overview of its features, links to mailing lists and discussion forums as well as information related to ZFS development, code and the on-disk data structure.

Operating System⌗

Since ZFS is developed by Sun, I decided to run Solaris because it would be the first OS to get the latest ZFS features and patches and would have the largest user and support base. At the time, Sun was distributing Solaris Express Community Edition (SXCE), which was part of their open source development of the upcoming Solaris 11. SXCE was getting regular updates to ZFS and a new build was being published every two weeks. Solaris 10 was an option; however, updates to Solaris 10 were more conservative (not all new features in SXCE were back ported to S10) and you had to wait months for a new Solaris Update to be released before you could take advantage of new features.

Hardware⌗

This is the list of hardware I settled on:

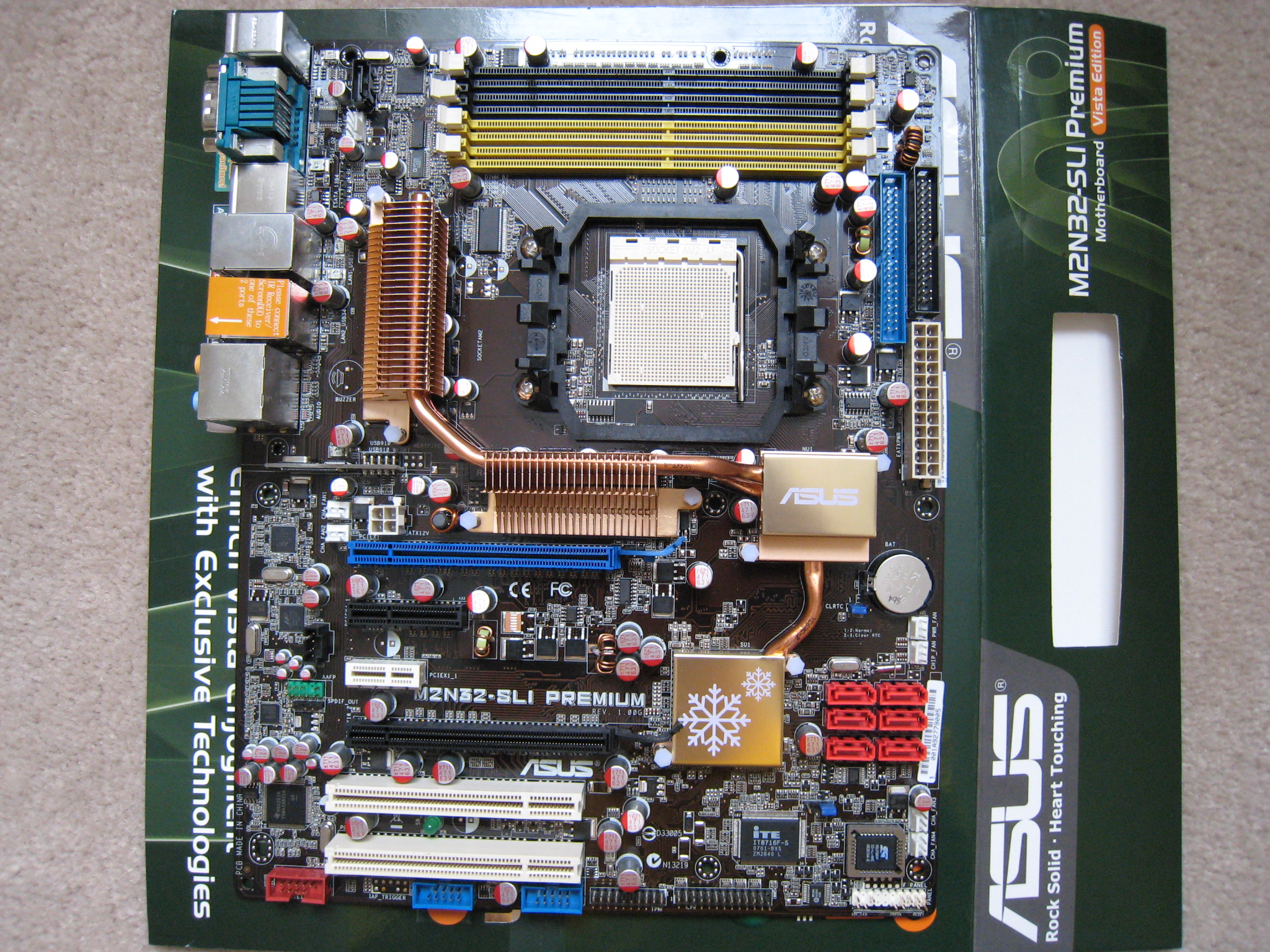

- ASUS M2N32-SLI Premium Vista Edition

- AMD Athlon(tm) 64 X2 Dual Core Processor 4000+ Socket AM2

- 2x 1GB Kingston DDR2-533 ECC

- Seagate 7200.10 160GB SATA 3Gb/s (system drive)

- 2x Seagate 7200.11 750GB SATA 3Gb/s (ZFS storage)

- A second-hand nVidia PCI graphics card

- Antec case with 350W PSU

I chose the M2N32-SLI board because it has 7 SATA 3.0Gb/s ports (plus 1 eSATA port). Most boards only have 4 ports. This allows me to install 6 drives for storage and 1 drive for the system/OS all within the case. The board also has 2x PCI, 1x PCIe x4, 1x PCIe x1 and 2x PCIe x16 slots for expansion. This board does not have on-board video which is the reason for the PCI graphics card. For storage I chose a 160GB drive for the operating system (which gives me enough room to do Live Upgrades) and two 750GB drives for storage (more on that down below).

The M2N32-SLI board is fully supported under SXCE, including the on-board NICs (nge). This board is not mentioned on the Solaris HCL but I can report everything that I use on it works just fine.

ZFS Configuration⌗

In the current version of ZFS you cannot grow a RAID-Z/Z2 pool by adding single drives. The best you can do is create a new RAID-Z/Z2 vdev and then stripe across it. This means that when it's time to upgrade you're adding a minimum of 3 drives every time (4 in the case of RAID-Z2). This is expensive and very inefficient. I decided instead to build the equivalent of a RAID10 and stripe across mirrored pairs.

The initial configuration is 2x 750GB drives set up as a mirror, which gives 750GB of storage space.

| Mirror (750GB Storage) | |
|---|---|
| 750GB Drive | 750GB Drive |

As the pool becomes full I plan on adding 2x 1TB drives in a mirrored configuration and then striping across the mirrors, giving the pool 1.75TB of storage.

| 1.75TB Stripe | | | |
|---|---|---|---|
| 750GB Mirror | | 1TB Mirror | |
| 750GB Drive | 750GB Drive | 1TB Drive | 1TB Drive |

I can keep expanding the pool this way to grow it as large as I need.
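The capacity arithmetic for this layout is simple enough to sketch in a few lines of Python. The helper names are made up, sizes are in nominal GB, and file system overhead is ignored: each mirror contributes the size of its smallest drive, and the stripe adds the mirrors together.

```python
def mirror_capacity(drive_sizes_gb):
    """A mirror is only as large as its smallest member."""
    return min(drive_sizes_gb)


def pool_capacity(mirrors):
    """Striping across mirrors adds their usable capacities together."""
    return sum(mirror_capacity(m) for m in mirrors)


initial = [[750, 750]]
expanded = initial + [[1000, 1000]]

print(pool_capacity(initial))   # 750
print(pool_capacity(expanded))  # 1750
```

The trade-off is that half of the raw disk space always goes to redundancy, in exchange for being able to grow two drives at a time.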

Another advantage of using mirrors is that they have very good read performance and since most of the work this server will be doing is serving up files for reading, that'll be an immediate benefit.

Migrating Data from FreeBSD to Solaris⌗

Because 3ware offers very limited Solaris driver support for their RAID cards, I first had to install FreeBSD 7 (which has support for ZFS) and use that to create my new storage pool and migrate my data onto it. Since ZFS is architecture/endian agnostic, it's possible to create a ZFS pool on FreeBSD, export it, and then import it on a Solaris system. It's even possible to export a pool on an Intel-based system and then import it on a SPARC-based system without any trouble.
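As I understand it, the endian-agnostic part works because data is written in the byte order of the machine that wrote it, along with a marker saying which order that was, and the reader swaps bytes only when it has to. The Python sketch below is a toy illustration of that idea under that assumption; the one-byte flag and the function names are invented and bear no relation to the real on-disk format.

```python
def write_block(value: int, byteorder: str) -> bytes:
    """Store a one-byte order flag, then the value in the writer's byte order."""
    flag = b"\x01" if byteorder == "little" else b"\x00"
    return flag + value.to_bytes(8, byteorder)


def read_block(block: bytes) -> int:
    """Decode using the order recorded in the flag, whatever the reader's CPU is."""
    byteorder = "little" if block[0] == 1 else "big"
    return int.from_bytes(block[1:], byteorder)


from_x86 = write_block(1750, "little")  # e.g. written on an Intel/AMD box
from_sparc = write_block(1750, "big")   # e.g. written on a SPARC box

print(from_x86 != from_sparc)                         # True: bytes differ on disk
print(read_block(from_x86), read_block(from_sparc))   # 1750 1750
```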

The Results⌗

The new system runs very well. There was really very little effort needed to get it up and going. The hardest part was figuring out how to migrate the data from the old RAID5 array onto the ZFS pool, given that there are no Solaris drivers for that RAID card and that I'm reusing the chassis and power supply from the old server in the new one (so I can't just copy data across the network to the new machine). Most of the planning that went into the system was in trying to make the system flexible enough to grow as my storage needs increased. Time will tell if I made good choices or not.

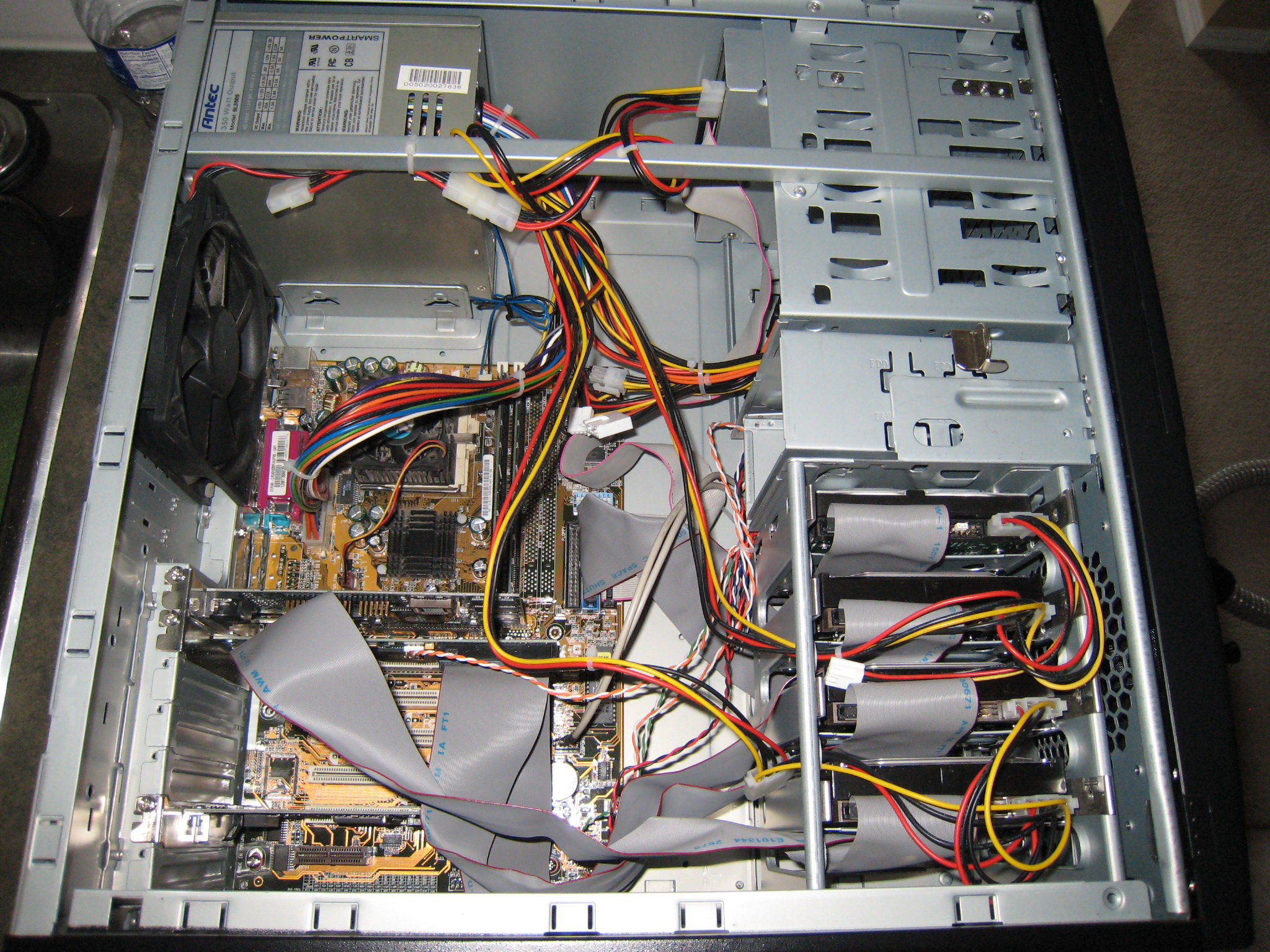

Pictures⌗